CripTech AI Lab presentations this Friday

Introducing Dr. Fifi Robinson, inventor of the Intuitive Imaging Instrument!

This Friday, October 24th, I’ll be presenting my work-in-progress for Leonardo’s CripTech AI Lab. In case it’s not on your radar, Leonardo is a nonprofit network that operates “at the nexus of arts, science and technology.” It was founded in 1968 as a journal by Frank Malina, an American in Paris who was both an aerospace engineer and a visual artist (I’ve been eager to get my paws on this new monograph). In a cultural landscape marked by the “Two Cultures,” a term coined by the British chemist/novelist C.P. Snow in the late ’50s to describe how artists and scientists weren’t talking to each other, Leonardo provided a platform for publishing work at the intersection of art and science/technology that wasn’t welcome elsewhere because of its in-betweenness––the stuff we now embrace as “interdisciplinary.” Many of my favorite artists have published strange little articles in Leonardo that are equal parts aesthetic treatise, scientific paper, and alternative exhibition space. Margaret Benyon’s “Holography as an Art Medium” (1973) and Vera Molnar’s “Toward Aesthetic Guidelines for Paintings with the Aid of a Computer” (1975) are texts I go back to again and again, always finding something new.

In fall of 2019 I spent a blissful, pre-Covid month in Paris doing dissertation research. Spooky season is my favorite time of year (I’m an October Libra, after all) and unfortunately France has a very boring take on Halloween. L’Halloween, c’est pour les enfants, I’ve been told repeatedly, scathingly, though I did go on a cute first date that October 31st in a bar decorated with cardboard rats and bats. The city makes up for its lack of Sunnydale vibes with its autumnal leaves, gorgeous cemeteries, and the perfect weather for wearing a duster coat à la Buffy Summers, which I could never do in Chicago, the city of no transitional seasons. Fall is when I studied abroad in Paris during college so it fills me with embarrassing amounts of nostalgia. So imagine me with the tails of my lavender duster billowing as I ascended from the métro at Boulogne-Billancourt (the same stop where I’d go for my hypermobility diagnosis two years later) to visit the home of the late Frank Malina.

The Leonardo archive was, at least at that time, housed in Malina’s old garden shed, which looked like a picturesque little ski chalet and was filled to the brim with disintegrating plastic bins full of yellowed documents. Sadly, I never found what I was looking for: the original manuscript Vera Molnar had submitted in 1974. I later found Malina’s annotations on Molnar’s manuscript in her personal archive, so I kind of scratched that itch. But I still wish I’d gotten lucky and found it on my first try. With Covid and everything happening soon after, I never went back to the Boulogne house.

All of this is to say, I’ve been a Leonardo fangirl for quite some time. A couple years ago, I read about their CripTech Incubator when it first launched as an art and technology fellowship for disability innovation, and when I moved to SoCal two years ago I saw the exhibition “Experiments in Art, Access and Technology (E.A.A.T.),” curated by Vanessa Chang and Lindsey Felt as the culmination of the first round of fellowships. It was held at the Beall Center for Art + Technology at UC Irvine, which had previously hosted Molnar’s first solo show in the U.S. (which I wrote about for HOLO), so that was a nice confluence. The show was amazing and is archived here.

When the CripTech Incubator announced that its 2025 focus would be artificial intelligence, I knew I needed to be a part of it. The Lead Artist for the AI Lab is M. Eilo, who makes very funny and smart work. Sometimes I still can’t believe I was selected for the 2025 cohort. This is the first artist residency I’ve done in a long time, since the analog holography workshop I did through the HoloCenter in 2016. After years of studying and working as an art historian, it feels good to be back to my first love, which is making art.

I wanted to use this newsletter to introduce the video I’ve been working on and to think through how to present a 10-minute video in a five-minute time frame. Ultimately, the fellowship will culminate in a virtual exhibition called “Slow AI,” which will launch in early December and will be hosted indefinitely on Leonardo’s website, as long as the internet and planet Earth shall live.

The working title is Feeling is Believing, and the topic is closely related to the comics I’ve been publishing here on High Functioning—namely, the challenges of seeking diagnoses for chronic pain and illness when your symptoms aren’t visible. It’s a sort of ’90s infomercial for a fantasy medical imaging instrument called the Intuitive Imaging Instrument, the III (pronounced triple-eye). I play a character named Dr. Fifi Robinson, a professional of unknown profession in a white lab coat with big hair and even bigger glasses. She is an entrepreneur from some sort of time warp, in which teased bangs, agentic AI, toll-free numbers, and pitch decks coexist.

I texted this gif to friends with the disclaimer “this is not a p0rn0.” I posted it to IG without the same disclaimer, so who knows if minds are running wild out there. In fact, this scene is not currently in the video, though it does give you a good idea of the aesthetic. I filmed the video using zero AI, on my parents’ old Sony Handycam camcorder (c. 2005), using real props that I’ve been collecting—pink exam gloves stolen from my gynecologist’s office, real x-rays from the ’90s bought on eBay, and a vintage light box I got from this place in Chino (ew!) that I found on FB Marketplace.

So what does this have to do with AI? Well, a lot of my b-roll footage is AI-generated: black-and-white “before” scenes of people rubbing their necks and eyes in pain and in slow motion. I ran those through a free app, ntsc-rs, that gives any video footage the look of a VHS tape. I tried an AI video editor called Descript that my partner, Matt, swears by, but when I used their “Eye Contact” effect, which “adjust[s] your gaze in video so it appears you’re looking right at the camera,” it changed my eye color and made me look like a demon, so I had to ask for my money back. I’ll save that one for a future project on neurodivergence…

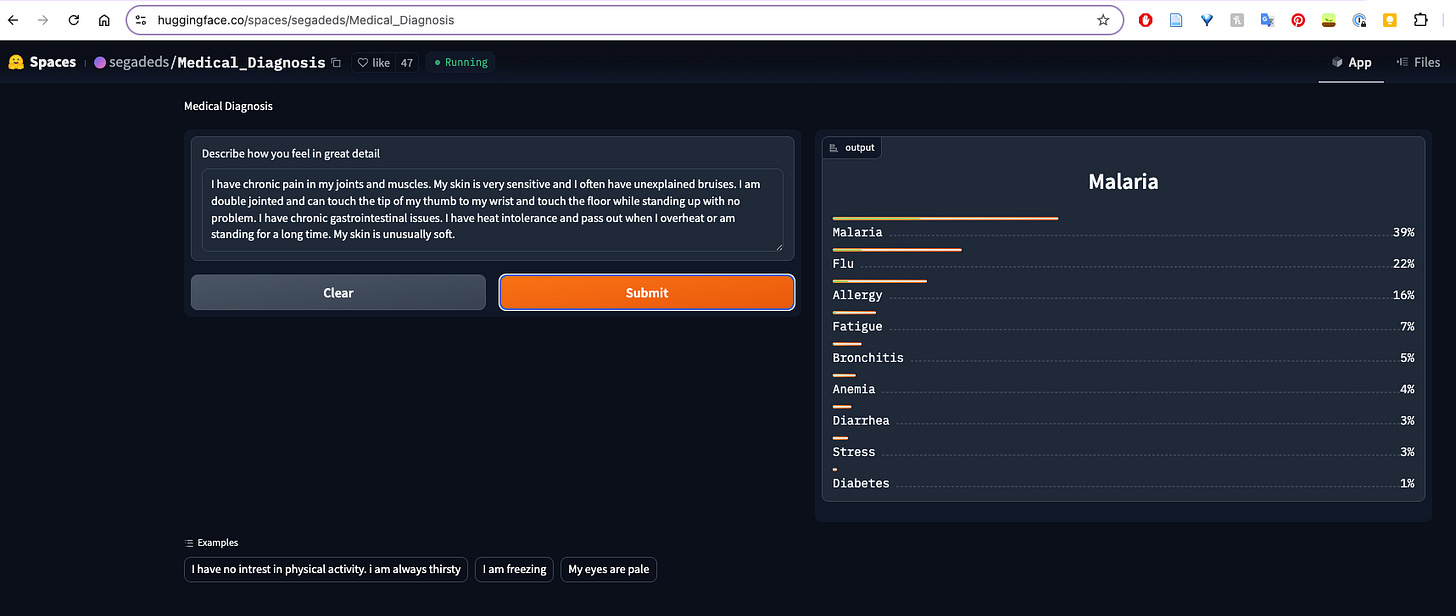

At the root of the project, however, are questions about the truth value of both medical imaging and AI-driven diagnostic tools. There’s so much to unpack about people using AI for therapy (have you listened to journalist Evan Ratliff’s podcast “Shell Game”?). But it’s kind of alarming how many people turn to AI for self-diagnosis the way they used to use Google, WebMD, or Reddit, not to mention how many doctors are encouraged to use AI to help them interpret results and make diagnoses. I don’t know how much these tools are actually working… but the bunk ones consistently predict I have either malaria or the common cold.

I’ve also spent a lot of time, as a patient, wishing that my pain/illness showed up in the diagnostic imaging that my doctors ordered over the years––the X-rays, the MRIs, the ultrasounds that all came back looking “normal.” Western medicine relies so heavily on visual evidence for diagnosis. So when something isn’t visible, when there’s no apparent fracture or fibroid or tumor, doctors often conclude that your pain is in your head––that it’s the result of a mental illness, rather than a physical one.

I wanted to create a tool that somehow captured the feeling of chronic pain in an image that maybe wasn’t medically useful, but that was validating. I started experimenting with different text-to-image models to varying degrees of success. I so badly wanted to run AI models locally––that is, off the cloud, using a lot less energy and without AI companies siphoning off my intellectual property. But my laptop was simply not powerful enough to handle local models, so I was stuck giving my money to commercial tools—namely, Midjourney, which feels very much like a slot machine.

I did a lot of research on aura photography, which is often marketed as “therapeutic” and is even offered at alternative medicine clinics. Aura cameras were invented by a guy named Guy Coggins right here in Southern California. He combined elements of Kirlian photography with instant film, biosensors, and a proprietary algorithm that converts biofeedback into spectral color. A double exposure overlays the aura color field onto a portrait of the subject.

I collected dozens of scanned aura photographs; lucky for me, they are well-loved and documented by millennials. My Gen Z students had never heard of them and showed little interest in the concept, maybe because they grew up online amidst post-truth, doctored images, so they don’t believe anything they see… I then made a Midjourney “moodboard” from my collection of aura photos with the subjects removed. I gave the model images of x-rays along with carefully crafted text prompts and asked it to create something in between an x-ray, a Bauhaus photogram, and an aura photograph, until I eventually got some images I liked.

The next step was planning what to do with these images. How was I going to design a tool that generated these for other people? I quickly realized that I am not a maker of tools. I am not an engineer. I am not a designer. I just don’t think like that. I am a user and creative misuser of tools. So for the purpose of this incubator, I decided to focus on the fantasy of this imaging device, and how it might be sold to people like me, who have struggled to be heard, understood, listened to, and validated.

With a partner who has a foot in the world of startups, I’ve been a fly on the wall at “pitch parties,” where entrepreneurs give five-minute presentations of their business plans using “slide decks,” which I, an out-of-touch academic, would simply call a PowerPoint. In California, you see a lot of New Age, woo-woo culture intersecting with startup culture in the form of wellness apps and elixir subscriptions. My hero Shana Moulton and I touched on this in our conversation for Art in America––the ever-present figure of the snake oil salesman, his similarity to the Avon lady, and the allure of that one gadget or potion that will cure all your ailments.

All of this fed into the making of Dr. Fifi Robinson––my alter ego, and perhaps the doctor I would have become had I listened to my parents’ advice and gone to medical school. Squeamish me, who faints at the sight of her own blood! Dr. Fifi Robinson has a sunny Southern California disposition; she has great skin (the camcorder blurs out my acne vulgaris) and she really listens to her patients, but she is also a capitalist, reminding you that her services are not covered by insurance.

If you want to hear my “pitch” on Friday, here is the link to register. The Zoom will take place 9-11am California time; I’m up in the second half. I’m so excited about all the projects, which explore a wide range of crip topics from medical surveillance to the nonlinearity of trauma narratives, from the visualizations of hyperacusis (abnormally low tolerance to sound) to what happens when a chatbot is trained with an awareness of crip experience. The WIP presentations will be recorded and I am happy to share a link after, if you just reply to this email asking me for it.

xoxo,

Zsofifi